Instagram Implements Stricter Safety Measures for Teen Users, Defaulting to PG-13 Content

Instagram is implementing new default PG-13 content restrictions and enhanced parental controls for users under 18, aimed at protecting teens from harmful material and limiting who they can interact with.

Overview

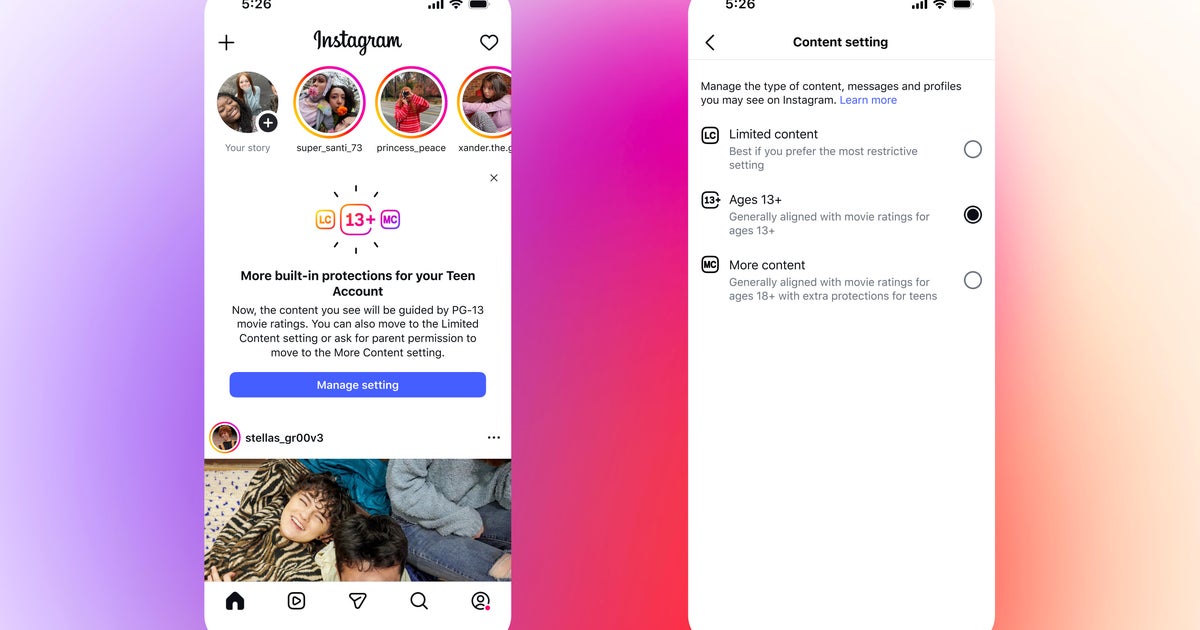

Meta's Instagram is applying new default PG-13 content restrictions to all users under 18. Teens will need a parent's permission to change these settings, a measure intended to improve safety for minors.

The platform will automatically place teen accounts into restrictive modes that limit their ability to view or interact with content deemed inappropriate, including explicit material and posts depicting risky behavior.

New parental controls add a stricter option that blocks more content and further restricts teens' interactions across features such as Feed, Stories, comments, and even AI conversations.

Instagram is expanding its list of blocked search terms to cover sensitive topics such as alcohol and gore, and will filter content from adult accounts that share age-inappropriate material.

These enhanced protections for teen accounts have begun rolling out in the US, UK, Australia, and Canada, with a full global rollout expected by the end of the year.

Analysis

Center-leaning sources frame Meta's Instagram safety updates with significant skepticism, emphasizing past failures and critics' doubts about the company's motives. They highlight concerns that these changes are a "PR stunt" aimed at forestalling legislation rather than genuinely protecting children, underscoring a narrative of corporate promises falling short.