Nvidia Unveils Vera Rubin AI Platform; Product Rollout Set for H2 2026 Amid Rising Competition

Nvidia unveiled the Vera Rubin AI architecture; products using Rubin will ship in H2 2026 amid intensifying global competition from cloud providers and chip rivals.

Here are the biggest announcements coming out of the 2026 Consumer Electronics Show, starting with Nvidia's Vera Rubin chips

Jensen Huang Says Nvidia’s New Vera Rubin Chips Are in ‘Full Production’

Nvidia's New Rubin Platform Shows Memory Is No Longer 'Afterthought' in AI

Overview

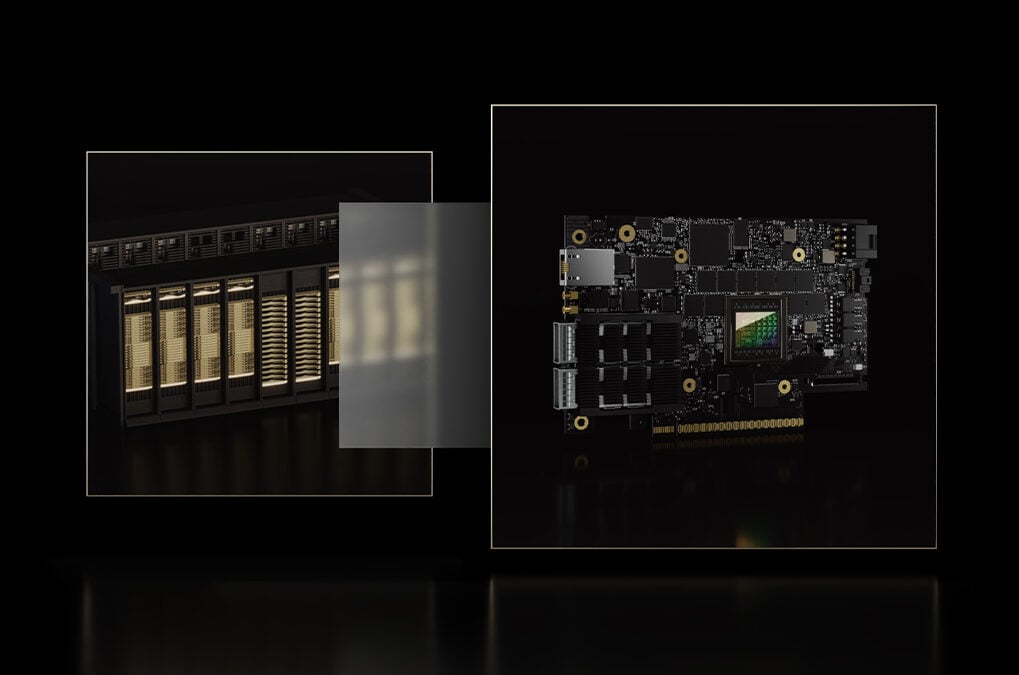

At CES, Nvidia CEO Jensen Huang introduced the Vera Rubin AI architecture, promising higher performance and new capabilities for complex AI workloads, model training, and large-scale data processing.

The Rubin chip platform integrates advanced compute and storage to accelerate training and inference. Nvidia expects initial chip deliveries to customers this year, with Rubin-based products shipping in H2 2026.

Major cloud providers, including Microsoft, AWS, Google Cloud and CoreWeave, are expected to deploy Rubin chips. Partnerships have also been reported with Anthropic, OpenAI and AWS for AI services and model hosting.

Competition is intensifying: software firms and cloud providers are pressing Nvidia, as are AMD and Qualcomm as they enter the data-center GPU market, while global demand for advanced GPUs rises.

Industry analysts estimate $3 trillion to $4 trillion in AI infrastructure spending over five years, raising the stakes for supply chains, customer access and the timing of the Rubin rollout.

Analysis

Center-leaning sources frame the story by emphasizing Nvidia's technological advancements and strategic partnerships, highlighting the Rubin chip's efficiency and potential impact on AI infrastructure. The language used is largely neutral, focusing on factual reporting of Nvidia's announcements and industry implications. However, the consistent emphasis on Nvidia's leadership and innovation subtly frames the company as a pivotal player in the tech industry, potentially overshadowing competitors.